AI safety and privacy in education

Use these recommendations and examples to build policies that protect student data and support safe AI use across your district.

Build strong guardrails

Families, teachers, and school leaders deserve confidence that AI tools protect student data. Clear AI policies help schools safeguard privacy and keep systems transparent.

Hold partners to strong compliance standards

Work with vendors that meet established privacy and security requirements.

- Verify FERPA, COPPA, and GDPR compliance

- Confirm a current SOC 2 attestation report and regular security audits

- Establish clear Data Processing Agreements (DPAs)

Build in protections for AI safety

Protect student information through safeguards built into daily workflows.

- Use content moderation and output monitoring

- Apply role-based access controls

- Maintain administrative oversight of tool capabilities

- Review data-sharing practices regularly
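For technical teams, role-based access control can be pictured as a lookup from a user's role to the actions that role may take. The sketch below is illustrative only: the role names and permission strings are hypothetical and do not describe any particular product.

```python
from enum import Enum


class Role(Enum):
    STUDENT = "student"
    TEACHER = "teacher"
    ADMIN = "admin"


# Hypothetical permission names, chosen only for illustration.
PERMISSIONS = {
    Role.STUDENT: {"use_approved_tools"},
    Role.TEACHER: {"use_approved_tools", "view_class_activity"},
    Role.ADMIN: {
        "use_approved_tools",
        "view_class_activity",
        "configure_tools",
        "export_audit_log",
    },
}


def is_allowed(role: Role, permission: str) -> bool:
    """Return True only if the role was explicitly granted the permission."""
    return permission in PERMISSIONS.get(role, set())
```

Denying by default, so that any action not listed for a role is refused, keeps new tool capabilities closed until an administrator deliberately grants them.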

Put student safety first

Prioritize protections that keep students safe while maintaining transparency for families.

- Filter content to block inappropriate material

- Protect personally identifiable information (PII) with encryption and secure handling

- Clearly disclose how data is collected and used

- Provide opt-in or opt-out options for families
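One form of secure PII handling is masking obvious identifiers before text leaves a district system. The sketch below shows redaction, not encryption, and makes simplifying assumptions: it covers only email addresses and US-style phone numbers, and the function name and placeholder tokens are hypothetical. Real deployments need broader detection and human review.

```python
import re

# Simplified patterns for illustration; they will miss many real-world formats.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def redact_pii(text: str) -> str:
    """Replace email addresses and US-style phone numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text
```

For example, `redact_pii("Contact jane.doe@example.org or 555-123-4567.")` returns `"Contact [EMAIL] or [PHONE]."`.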

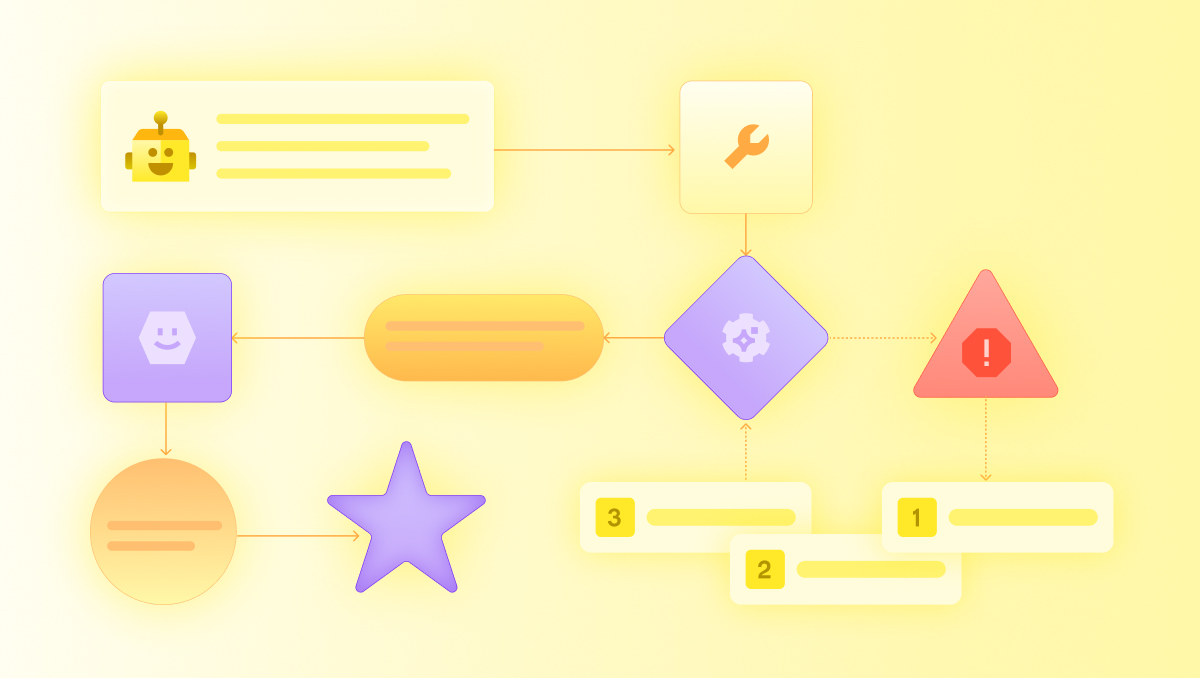

How MagicSchool supports AI safety and privacy

Safety and privacy are built into the MagicSchool platform. Key protections include:

- Compliance with FERPA, COPPA, SOC 2, and GDPR standards

- Tiered protection for personally identifiable information (PII), including encryption

- Role-based access controls for tools and features

- Content moderation, flagging, and audit tools

- Administrative dashboards for oversight and monitoring

- Custom Data Processing Agreements (DPAs)

More resources on AI safety and privacy

Explore national guidance and district examples that support responsible AI implementation.

National guidelines

State examples

Learn more about AI safety and privacy in education
