How K–12 districts ensure safe and effective AI adoption

Learn how districts can adopt AI responsibly while protecting student data, ensuring equity, and improving learning outcomes.

As AI becomes more present in K–12 classrooms, district leaders are working out how to adopt AI so it's both safe and instructionally sound. Safe and effective AI adoption means protecting students, preserving trust, and ensuring AI strengthens learning rather than undermining it.

Here’s what responsible AI in education looks like in practice, and the conditions districts need to move from experimentation to confident, system-level adoption.

What safe and effective AI adoption means for K–12 districts

Safe and effective AI adoption in K–12 isn't a one-time decision or a checkbox. It requires districts to continually balance two things at once: protecting staff and students, and making sure AI meaningfully supports both teaching and learning.

Schools need clear safeguards in place for the responsible use of AI in education. These include strong data privacy practices, compliance with student protection laws, secure systems, and guardrails that uphold academic integrity and ethical use. These protections help build confidence among educators and families.

At the same time, AI has to be useful in classrooms. It should align to standards, support student learning, and reduce the day-to-day workload that pulls educators away from teaching. When tools fit naturally into existing instructional practices, they’re more likely to be used consistently and well.

Focusing on only one side creates risk. Tools that are safe but disconnected from instruction often go unused. Tools that promise instructional gains without strong safeguards raise compliance and trust concerns. Districts that evaluate safety and educational value together are better positioned to adopt AI responsibly and at scale.

Building responsible use guardrails for AI in schools

Responsible use of AI tools in education starts with clarity. Districts need a shared framework that explains how AI supports learning and where its use should stop. In K–12 settings, that often means using AI for planning, differentiation, feedback, and scaffolding, while setting clear boundaries around completing student work or replacing core learning tasks.

These expectations should align with existing academic integrity and plagiarism policies, helping educators and students understand the difference between support and substitution. Assessment design also matters. When students are asked to show their reasoning or apply learning in meaningful ways, AI can reinforce understanding. When tasks rely mostly on recall or surface-level responses, boundaries become harder to maintain.

Effective guardrails are clear, consistent, and easy to explain. They’re reinforced through professional learning and ongoing communication, so educators and students understand not just the rules, but the instructional intent behind them.

Protecting student data, privacy, and security

Protecting student data, privacy, and security is foundational to responsible AI use in education. For K–12 districts, this work starts well before AI shows up in classrooms and continues through policy, procurement, and ongoing oversight.

The following considerations are key to protecting student data and keeping students safe.

FERPA, COPPA, and student data laws

Districts need to ensure AI tools align with federal and state student data protections, including FERPA, COPPA, and applicable state privacy laws. That means confirming student data is used only for educational purposes, stays under district control, and is never repurposed for advertising or secondary use. Clear policies around data access, retention, and deletion are essential for both compliance and trust.

Vendor evaluation and procurement

AI vendors should be reviewed with the same rigor as core instructional and enterprise systems. Districts increasingly look for clear answers on security practices, encryption, sub-processors, access controls, and incident response. Vendors built specifically for K–12, with transparent documentation and clear accountability, are better positioned to meet district needs than consumer tools adapted for schools.

Model training and data sovereignty

District leaders also need clarity around data sovereignty. This includes whether student or educator data is used to train AI models, where data is stored, and who ultimately owns it. Responsible AI in education requires explicit model training opt-outs and clear assurances that district data remains protected.

Strong privacy and security practices do more than meet requirements. They help build public trust and give districts the confidence to adopt AI responsibly while honoring their responsibility to students, families, and communities.

AI professional development and teacher readiness

AI adoption in K–12 districts doesn't scale safely without intentional professional development. When teachers are left to navigate AI on their own, usage becomes inconsistent, risk increases, and trust weakens. Effective districts invest in hands-on, scenario-based learning that reflects real classroom conditions. Educators need practical guidance on what AI can and cannot do, how to use it ethically, and how district privacy policies apply to everyday instructional decisions.

Just as important, professional learning should respect teacher autonomy and instructional judgment, positioning AI as a support rather than a mandate or a monitoring tool. When educators are prepared, confident, and supported, AI use becomes more consistent, safer, and more instructionally effective.

AI education platform pilot design, evaluation, and improvement

Well-designed pilot programs give districts a safe way to explore responsible AI use in education without committing to full-scale adoption too early. They help district leaders see what works, where adjustments may be needed, and how AI fits into real instructional and operational contexts.

Before launching, districts should define what they’re trying to learn, who the pilot is for, and what success looks like. Pilots work best when they’re limited in scope, time-bound, and aligned to specific instructional priorities, such as lesson planning, feedback, or differentiation. Clear guardrails around data use, academic integrity, and acceptable use should be in place from the start.

AI adoption pitfalls to avoid

As districts explore the responsible use of AI in education, a few common obstacles tend to slow progress or introduce unnecessary risk. One is getting stuck in open-ended pilot programs without clear goals, success criteria, or a path to scale. Another is tool-first adoption, where AI is introduced without clear alignment to curriculum, instruction, or district priorities.

Other issues show up when academic integrity isn’t actively monitored, privacy and security considerations are addressed too late, or pilots stay fragmented across schools and departments. The result is often a lot of activity, but very little insight districts can act on.

Gaps in professional learning can also lead to teacher fatigue, especially when educators are asked to experiment without clear guidance or support. Early adoption conversations should also make sure potential skeptics understand the risks of using AI in education without guardrails.

Districts that see the strongest results take a more proactive approach to the inherent risks of AI in education, setting clear expectations, shared guardrails, and defined pathways from pilot to broader adoption so AI supports learning rather than adding complexity.

Ensuring AI equity and access across districts

AI adoption in K–12 districts can either help close opportunity gaps or unintentionally widen them. The difference comes down to how intentionally districts plan for access and oversight. Equitable adoption means ensuring all schools have consistent access to approved tools, reliable devices and connectivity, and protected time for professional learning and coaching.

From an instructional perspective, AI can be a meaningful support for differentiated instruction, multilingual learners, and students with disabilities when its use is guided, developmentally appropriate, and aligned to IEPs and language supports. Districts play an important role in monitoring equity by looking at how tools are used, where impact shows up, and which schools or student groups may need more support.

With clear visibility and the ability to step in when needed, district leaders can help ensure AI expands access to high-quality instruction rather than reinforcing existing disparities.

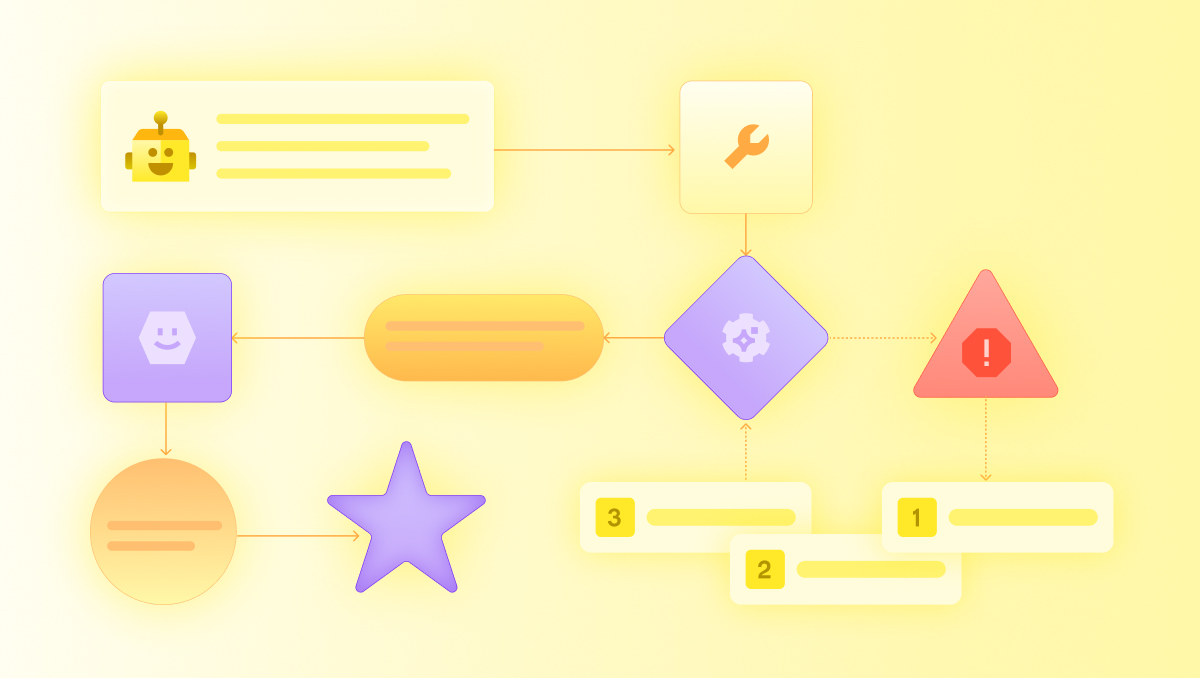

How MagicSchool can help

Safe and effective AI adoption takes more than good intentions. It requires clear guardrails, strong instructional alignment, meaningful professional learning, and trust built over time. MagicSchool was designed to support districts across all of these areas.

With enterprise-grade security, strong privacy protections, and built-in guardrails for responsible AI use in education, we help districts protect students while making sure AI delivers real instructional value. From pilots and professional learning to system-level adoption, we support districts in implementing AI in ways that align with curriculum, policy, and community expectations.

Ready to take the next step? Book a demo to see how MagicSchool supports safe, effective AI adoption at scale, or download our District Guide to Responsible AI Adoption to deepen your research and build a shared foundation for confident decision-making.

What makes AI adoption ‘safe’ in K–12 schools?

Safe AI adoption in K–12 schools means AI tools meet student privacy standards, align with district policies, and support responsible classroom use. Districts should evaluate how tools handle student data, support instructional goals, and provide safeguards for educators and students.

How do districts evaluate instructional efficacy of AI tools?

Districts evaluate instructional efficacy by determining whether AI tools improve teaching practices, support student learning, and align with curriculum goals. Many school systems review classroom usability, teacher adoption, instructional quality, and alignment to district priorities before scaling implementation.

Why does AI adoption require privacy and compliance checks?

Privacy and compliance checks help ensure student data is protected and AI tools meet federal, state, and district requirements. District leaders should review vendor policies, data handling practices, and security standards before approving classroom implementation.

What role do teachers play in successful AI adoption?

Teachers play a critical role in successful AI adoption because they determine how tools are used in real classroom settings. Educators help ensure AI supports student learning, aligns with instructional goals, and is implemented responsibly and effectively.
